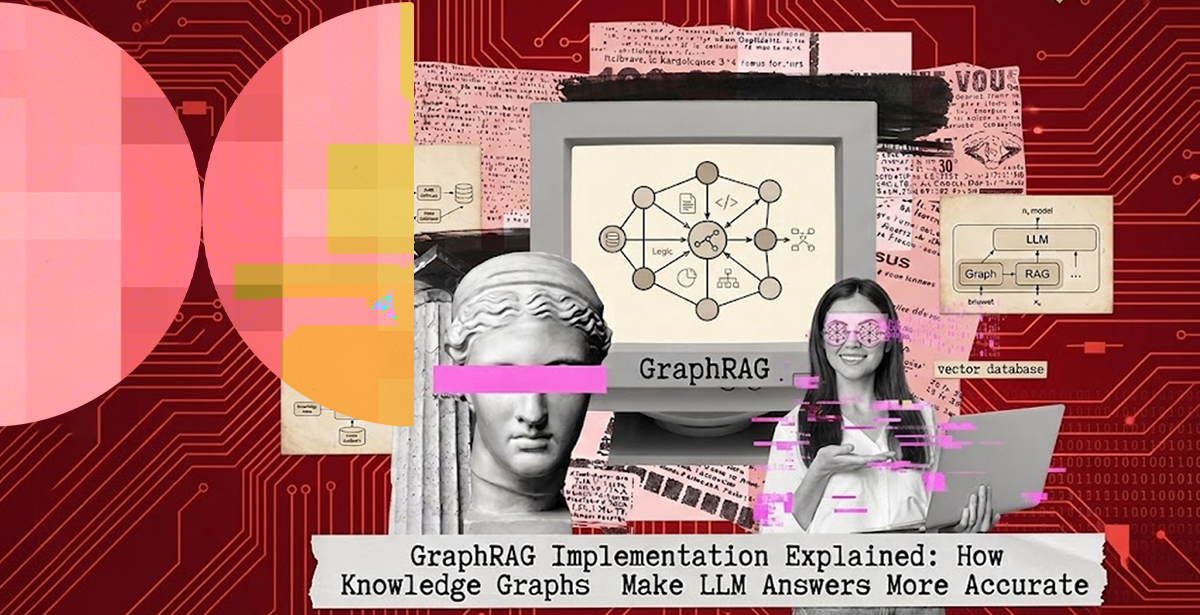

GraphRAG Implementation Explained: How Knowledge Graphs Make LLM Answers More Accurate

Large language models are powerful. But left to their own devices, they hallucinate, lose track of relationships between entities, and produce confident-sounding answers built on thin retrieval.

Retrieval augmented generation (RAG) has proven to be a major step forward. Feed the model the right context at query time, and answers get significantly better. But standard vector-based RAG has a ceiling. When a question requires connecting multiple facts across a large body of data, flat chunk retrieval often misses the relational glue.

That’s where GraphRAG implementation can help.

By grounding retrieval in a structured knowledge graph rather than a flat vector index, GraphRAG enables LLMs to reason across entities, relationships, and context. This, in turn, produces answers that are more accurate, more traceable, and genuinely more useful in production.

What is Graph Retrieval Augmented Generation?

Graph retrieval augmented generation is an architecture that combines the semantic retrieval strengths of standard RAG with the structural intelligence of knowledge graphs.

Rather than treating a document corpus as a pool of vector-embedded text chunks, GraphRAG models it as a graph, with entities as nodes and relationships as edges.

At query time, the system doesn’t just pull the most semantically similar chunks. It traverses the graph, identifies relevant entities and the relationships connecting them, then assembles a richer, structured context window for the LLM.

The result: a model that can answer complex, multi-hop questions, not just single-fact lookups.
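To make “multi-hop” concrete, here is a minimal sketch using only the Python standard library. The entities, relations, and helper names (`build_adjacency`, `find_path`) are hypothetical, and a real system would run this traversal inside a graph database rather than over an in-memory list. The question “Could Drug A affect Drug B?” needs two hops, and a breadth-first search over the graph recovers exactly that chain:

```python
from collections import deque

# Hypothetical triples: each fact could live in a different document,
# so no single retrieved chunk links "Drug A" to "Drug B".
TRIPLES = [
    ("Drug A", "inhibits", "Enzyme X"),
    ("Enzyme X", "metabolizes", "Drug B"),
    ("Drug B", "treats", "Condition Y"),
]

def build_adjacency(triples):
    """Index triples by subject so traversal can follow outgoing edges."""
    adj = {}
    for s, r, o in triples:
        adj.setdefault(s, []).append((r, o))
    return adj

def find_path(adj, start, goal, max_hops=3):
    """Breadth-first search for the shortest chain of edges from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        if len(path) >= max_hops:
            continue
        for rel, nxt in adj.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [(node, rel, nxt)]))
    return None  # goal not reachable within max_hops

adj = build_adjacency(TRIPLES)
path = find_path(adj, "Drug A", "Drug B")
for s, r, o in path:
    print(f"{s} --{r}--> {o}")
```

Running it prints `Drug A --inhibits--> Enzyme X` followed by `Enzyme X --metabolizes--> Drug B`. That connected path, rather than two unrelated chunks, is what the LLM receives as context.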

How Structured RAG Improves LLM Accuracy

GraphRAG is essentially a specialized type of structured RAG where the structure is a knowledge graph (entities + relationships). Instead of querying rows or tables, it traverses connections between entities to retrieve context.

With structured RAG, an LLM that receives knowledge-graph-sourced context isn’t reading isolated facts; it’s reading facts with their connections intact.

Three specific gains stand out.

- Multi-hop Reasoning – Questions requiring two or three inferential steps across a corpus are only answerable when retrieval surfaces connected knowledge, not just isolated facts.

- Reduced Hallucination – Structured RAG reduces hallucination at the source. When the model receives accurate, relationship-structured context, it has less reason to fabricate detail to fill gaps.

- Auditability – Graph-based retrieval produces traceable reasoning paths. You can inspect which nodes and edges informed an answer. This is especially critical in regulated industries like healthcare and finance.
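Auditability comes from keeping provenance on every edge. The sketch below is a minimal illustration, assuming each extracted fact stores the ID of the document it came from; the `Edge` class, the `retrieve_with_trace` helper, and the document IDs are all hypothetical. Whatever the traversal touches is returned as a list of edges, so you can show exactly which facts and sources informed an answer:

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Edge:
    subject: str
    relation: str
    obj: str
    source_doc: str  # provenance: the document this fact was extracted from

# Hypothetical edges; the document IDs are illustrative placeholders.
EDGES = [
    Edge("Acme Corp", "subsidiary_of", "Globex", "filing-2023-07"),
    Edge("Globex", "regulated_by", "Agency Z", "memo-114"),
    Edge("Acme Corp", "located_in", "Ohio", "filing-2023-07"),
]

def retrieve_with_trace(entity, edges, hops=2):
    """Return every edge reachable from `entity` within `hops`, in discovery order.

    The returned list doubles as an audit trail: each edge names its source.
    """
    frontier, used = {entity}, []
    for _ in range(hops):
        next_frontier = set()
        for e in edges:
            if e.subject in frontier and e not in used:
                used.append(e)
                next_frontier.add(e.obj)
        frontier = next_frontier
    return used

trace = retrieve_with_trace("Acme Corp", EDGES)
for e in trace:
    print(f"{e.subject} -[{e.relation}]-> {e.obj}  (source: {e.source_doc})")
```

In a regulated setting, this trace is what a reviewer would inspect alongside the generated answer.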

According to Microsoft Research, whose GraphRAG paper has driven significant enterprise adoption, community-based graph summaries consistently outperform naive RAG on global reasoning queries (questions that span an entire large, complex corpus rather than a single passage).
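The Microsoft pipeline groups the graph into communities using the Leiden algorithm and has an LLM write a summary of each one. The sketch below swaps in much simpler pieces so it stays short and runnable: connected components (via union-find) stand in for Leiden, and the “summary” is just the community’s facts joined together rather than LLM output. All entity names and helpers here are hypothetical:

```python
from collections import defaultdict

# Hypothetical triples forming two unrelated topic clusters.
TRIPLES = [
    ("Supplier A", "ships_to", "Plant 1"),
    ("Plant 1", "produces", "Part P"),
    ("Regulation R", "applies_to", "Device D"),
    ("Device D", "uses", "Sensor S"),
]

def communities(triples):
    """Group entities by connected component (a simple stand-in for Leiden)."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for s, _, o in triples:
        parent[find(s)] = find(o)  # union the two endpoints' sets

    groups = defaultdict(set)
    for node in list(parent):
        groups[find(node)].add(node)
    return list(groups.values())

def summarize(community, triples):
    """Placeholder summary: the facts inside one community (an LLM would write prose here)."""
    return "; ".join(f"{s} {r} {o}" for s, r, o in triples if s in community and o in community)

for c in communities(TRIPLES):
    print(sorted(c), "->", summarize(c, TRIPLES))
```

A global question is then answered from these per-community summaries instead of from whichever individual chunks happen to rank highest.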

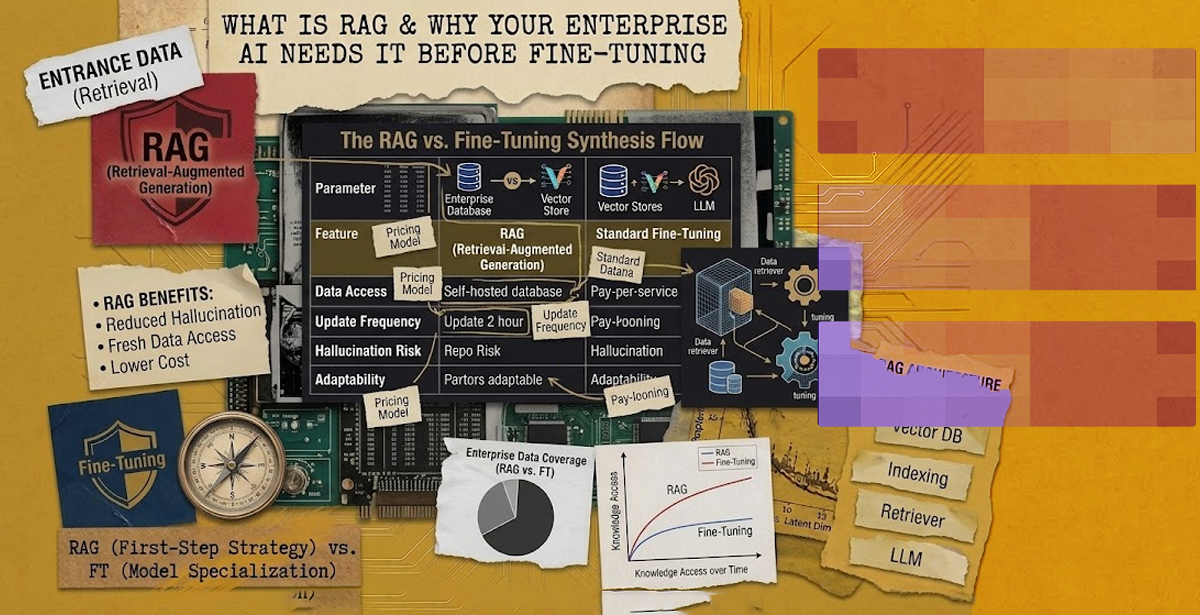

Standard RAG vs. Knowledge Graph RAG

Standard RAG and Knowledge Graph RAG solve the same core problem, i.e. grounding LLM responses in external data. However, they do it in very different ways. And the difference isn’t just technical; it affects accuracy, explainability, and the kinds of questions you can answer.

Picking the wrong one can break your use case, so it pays to understand how each works before you choose.

Standard RAG splits documents into chunks, embeds them as vectors, and retrieves the top-N chunks most similar to a given query. It works well for targeted factual lookups. However, it breaks down when the answer depends on reasoning across multiple entities or documents.
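The standard pipeline can be sketched in a few lines. This is a toy, assuming sentence-level chunks and a bag-of-words count vector in place of a learned embedding model; `chunk`, `embed`, and `top_n` are hypothetical helper names, not a library API:

```python
import math
import re
from collections import Counter

def chunk(text):
    """Split text into sentence-sized chunks (real systems often use token windows with overlap)."""
    return [s for s in re.split(r"(?<=\.)\s+", text.strip()) if s]

def embed(text):
    """Toy embedding: a bag-of-words count vector, standing in for a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def top_n(query, chunks, n=2):
    """Rank every chunk by similarity to the query and keep the best n."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:n]

doc = "Drug A inhibits Enzyme X. Enzyme X metabolizes Drug B. Drug B treats Condition Y."
chunks = chunk(doc)
print(top_n("Which enzyme metabolizes Drug B?", chunks, n=1))
```

For this targeted lookup, the top hit is the `Enzyme X metabolizes Drug B.` chunk. A question like whether Drug A affects Drug B is harder: the answer depends on two separate chunks being retrieved together and the model connecting them, and similarity ranking alone does not guarantee either.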

Knowledge graph RAG, on the other hand, solves this by adding an explicit semantic layer. Entities (e.g. people, products, regulations, clinical findings) are extracted and indexed as nodes, and their relationships become edges. When a user submits a complex query, retrieval doesn’t just surface relevant passages; it traverses the graph to pull in connected entities and relationships that similarity search alone would miss.
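One way to picture that semantic layer is a minimal sketch in which each entity node links to the passages that mention it. The edges, the `MENTIONS` index, and the passages are hypothetical; the point is that retrieval follows edges instead of word overlap:

```python
# Hypothetical semantic layer: relationship edges between entities plus a
# "mentions" index linking each entity to the passages it appears in.
EDGES = [
    ("Regulation R", "applies_to", "Device D"),
    ("Device D", "contains", "Sensor S"),
]
MENTIONS = {"Regulation R": ["p1"], "Device D": ["p2"], "Sensor S": ["p3"]}
PASSAGES = {
    "p1": "Regulation R sets limits on emissions testing.",
    "p2": "Device D is sold in the EU market.",
    "p3": "Sensor S has a known calibration drift issue.",
}

def neighbors(entity):
    """Entities one edge away, following edges in either direction."""
    return [o for s, _, o in EDGES if s == entity] + [s for s, _, o in EDGES if o == entity]

def graph_passages(entity, hops=2):
    """Passages that mention the entity or anything within `hops` edges of it."""
    seen, frontier = {entity}, [entity]
    for _ in range(hops):
        frontier = [n for e in frontier for n in neighbors(e) if n not in seen]
        seen.update(frontier)
    ids = sorted({pid for e in seen for pid in MENTIONS.get(e, [])})
    return [PASSAGES[i] for i in ids]

for passage in graph_passages("Regulation R"):
    print(passage)
```

Asking about Regulation R pulls in the sensor calibration passage even though it shares no words with the query; the link comes from the graph, not from similarity.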

In practice, though, the choice usually isn’t one or the other. Many mature systems opt for a hybrid approach that uses:

- Vector search for broad semantic retrieval

- A knowledge graph for reasoning and precision

- An LLM to synthesize the final answer
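A hybrid retriever can be sketched as three steps that follow that division of labor. This is a minimal illustration under stated assumptions: the bag-of-words `bow` function stands in for a real embedding model, the anchor entity is supplied by hand, and the final LLM call is left out, so the function stops at the combined context string. All data and function names are hypothetical:

```python
import math
from collections import Counter

# Hypothetical corpus chunks and graph facts; both are illustrative only.
CHUNKS = [
    "Plant 1 reported a delay in Q3 shipments.",
    "Part P is used in the braking assembly.",
    "The cafeteria menu changed in Q3.",
]
FACTS = [
    ("Supplier A", "ships_to", "Plant 1"),
    ("Plant 1", "produces", "Part P"),
]

def bow(text):
    """Toy bag-of-words vector standing in for a real embedding model."""
    return Counter(w.strip(".,?").lower() for w in text.split())

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0

def hybrid_context(query, anchor, k=1):
    # 1) Vector search: broad semantic recall over unstructured chunks.
    q = bow(query)
    chunks = sorted(CHUNKS, key=lambda c: cosine(q, bow(c)), reverse=True)[:k]
    # 2) Knowledge graph: precise facts touching the anchor entity.
    facts = [f for f in FACTS if anchor in (f[0], f[2])]
    # 3) Combined context that an LLM would synthesize an answer from.
    lines = ["Passages:"] + [f"- {c}" for c in chunks]
    lines += ["Facts:"] + [f"- {s} {r} {o}" for s, r, o in facts]
    return "\n".join(lines)

print(hybrid_context("Why were Plant 1 shipments delayed?", anchor="Plant 1"))
```

The passage explains what happened; the graph facts add who supplies the plant and what it produces, which the LLM can use to reason about downstream impact.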

How GraphRAG Development Works

GraphRAG development isn’t just about plugging a knowledge graph into an LLM. It’s about structuring information as interconnected entities, enriching it with meaning, and enabling the model to traverse those relationships with intent.

Achieving this involves four core phases.

1) Knowledge Graph Construction

Documents are processed with NLP pipelines that extract entities and their relationships, which are then stored in a graph database. The right data engineering services handle this extraction and structuring layer, keeping the graph clean, consistent, and domain-appropriate.
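The sketch below shows the shape of this phase under heavy simplification. Two hypothetical regex patterns stand in for an NLP extraction pipeline (production systems typically use NER and relation-extraction models or LLM prompts), and a small in-memory class stands in for the graph database. `PATTERNS`, `extract_triples`, and `InMemoryGraph` are illustrative names, not a library API:

```python
import re
from collections import defaultdict

# Hypothetical relation patterns; regexes only keep this example runnable.
PATTERNS = {
    "acquired": re.compile(r"([A-Z][\w ]*?) acquired ([A-Z][\w ]*)"),
    "supplies": re.compile(r"([A-Z][\w ]*?) supplies ([A-Z][\w ]*)"),
}

def extract_triples(text):
    """Split text into sentences and pull (subject, relation, object) triples from each."""
    triples = []
    for sentence in re.split(r"(?<=\.)\s+", text.strip()):
        sentence = sentence.rstrip(".")
        for relation, pattern in PATTERNS.items():
            for subj, obj in pattern.findall(sentence):
                triples.append((subj.strip(), relation, obj.strip()))
    return triples

class InMemoryGraph:
    """Stand-in for a graph database: nodes plus directed, labeled edges."""

    def __init__(self):
        self.edges = defaultdict(set)

    def add(self, subj, relation, obj):
        self.edges[subj].add((relation, obj))
        self.edges.setdefault(obj, set())  # ensure the object exists as a node

    def nodes(self):
        return set(self.edges)

doc = "Globex acquired Initech. Initech supplies Acme Corp."
graph = InMemoryGraph()
for t in extract_triples(doc):
    graph.add(*t)
print(graph.nodes())
```

The brittleness of the regexes is the point: extraction quality sets the ceiling on graph quality, which is why this layer deserves real engineering effort.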

2) Graph Indexing and Embedding

Nodes and edges are enriched with vector embeddings so that semantic similarity search operates in tandem with graph traversal. This hybrid layer is what makes GraphRAG more powerful than either approach alone.
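Here is a minimal sketch of similarity search and traversal working together, assuming each node is embedded from its name plus a short description. The toy bag-of-words `embed` function stands in for a learned embedding model, and the nodes, edges, and `search_then_expand` helper are hypothetical:

```python
import math
from collections import Counter

# Hypothetical nodes with short text descriptions, plus edges between them.
NODE_TEXT = {
    "Router R1": "home wifi router with dual band radio",
    "Firmware 2.1": "software update that fixes dropped wireless connections",
    "Ticket 42": "customer reports slow speed after reboot",
}
EDGES = [
    ("Router R1", "runs", "Firmware 2.1"),
    ("Ticket 42", "about", "Router R1"),
]

def embed(text):
    """Toy bag-of-words vector; a real index would store learned embeddings per node."""
    return Counter(text.lower().split())

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0

# Indexing: embed each node's name together with its description.
NODE_VECS = {name: embed(f"{name} {desc}") for name, desc in NODE_TEXT.items()}

def search_then_expand(query):
    """Vector search picks the entry node; graph traversal adds its direct relationships."""
    q = embed(query)
    entry = max(NODE_VECS, key=lambda n: cosine(q, NODE_VECS[n]))
    related = [(s, r, o) for s, r, o in EDGES if entry in (s, o)]
    return entry, related

print(search_then_expand("wireless connections keep dropping"))
```

Similarity finds the firmware node, and the edge to Router R1 brings in the device it runs on, which the query never mentioned. Expanding one more hop would also reach Ticket 42.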

3) Query-time Retrieval

At inference, the system identifies anchor entities in the query, traverses the knowledge graph to find related nodes, and assembles a structured context payload for the LLM.
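The three query-time steps can be sketched as follows. This is an illustration under simplifying assumptions: anchor detection is plain case-insensitive name matching (a stand-in for NER plus entity linking), the graph is a small in-memory dictionary, and the payload layout and helper names are hypothetical:

```python
import json

# Hypothetical graph stored as an adjacency list of (relation, target) pairs.
GRAPH = {
    "Acme Corp": [("subsidiary_of", "Globex")],
    "Globex": [("headquartered_in", "Springfield"), ("ceo", "Jane Doe")],
    "Springfield": [],
    "Jane Doe": [],
}

def find_anchors(query, graph):
    """Anchor detection by case-insensitive name matching (stand-in for entity linking)."""
    q = query.lower()
    return [name for name in graph if name.lower() in q]

def traverse(graph, anchors, hops=2):
    """Collect (subject, relation, object) edges within `hops` of the anchors."""
    frontier, seen, edges = list(anchors), set(anchors), []
    for _ in range(hops):
        next_frontier = []
        for node in frontier:
            for rel, target in graph.get(node, []):
                edges.append((node, rel, target))
                if target not in seen:
                    seen.add(target)
                    next_frontier.append(target)
        frontier = next_frontier
    return edges

def build_payload(query, graph):
    """Assemble the structured context payload handed to the LLM."""
    anchors = find_anchors(query, graph)
    facts = [{"subject": s, "relation": r, "object": o} for s, r, o in traverse(graph, anchors)]
    return {"query": query, "anchors": anchors, "facts": facts}

payload = build_payload("Who runs the parent company of Acme Corp?", GRAPH)
print(json.dumps(payload, indent=2))
```

The payload keeps each relationship explicit as subject, relation, and object rather than flattening it into prose, so the generation step can see how every fact connects back to the anchor.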

4) LLM Generation

The LLM receives a relationship-aware, context-rich prompt and generates its response, usually with significantly more signal than flat chunk retrieval provides.
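In practice, “relationship-aware” can be as simple as rendering retrieved edges as numbered statements and asking the model to answer only from them and cite what it used. This is one plausible prompt layout, not a standard: the template wording and the `build_prompt` helper are hypothetical, and the actual model call is omitted because it depends on your LLM provider:

```python
def build_prompt(question, facts):
    """Render graph facts as numbered lines so the model can cite them by ID."""
    lines = [f"[F{i}] {s} --{r}--> {o}" for i, (s, r, o) in enumerate(facts, start=1)]
    return (
        "Answer using only the facts below. Cite fact IDs like [F1].\n"
        "If the facts are insufficient, say so.\n\n"
        "Facts:\n" + "\n".join(lines) + f"\n\nQuestion: {question}\nAnswer:"
    )

# Hypothetical facts, e.g. the output of a graph traversal.
facts = [
    ("Acme Corp", "subsidiary_of", "Globex"),
    ("Globex", "ceo", "Jane Doe"),
]
prompt = build_prompt("Who runs the parent company of Acme Corp?", facts)
print(prompt)
# The prompt would then be sent to whichever LLM client you use (not shown).
```

Numbered fact IDs also tie generation back to auditability: a cited `[F2]` maps directly to one edge in the graph.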

Done right, GraphRAG implementation produces systems that are measurably more accurate and far less prone to hallucination.

💡 Don’t choose a partner that treats GraphRAG as just RAG plus a graph database. Look for AI engineering services with strong expertise in data modeling and entity design, because GraphRAG depends on well-defined relationships, not just LLM performance. Also evaluate how they handle entity resolution, schema evolution, and hybrid retrieval across graph and vector systems. If their answers are tool-focused rather than architectural, they’re likely optimizing for demos instead of scalable, reliable solutions.
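Entity resolution deserves a concrete look because it quietly decides whether the graph is connected at all: if “Acme Corp” and “ACME Corporation” become two nodes, every relationship is split between them. The sketch below is deliberately naive. It lowercases names, strips punctuation, and drops a hypothetical list of legal suffixes; real resolution usually adds fuzzy matching, embeddings, or curated identifiers. `canonical_key` and `resolve` are illustrative names:

```python
import re

# Hypothetical list of legal suffixes to ignore when comparing names.
SUFFIXES = {"inc", "corp", "corporation", "ltd", "llc"}

def canonical_key(name):
    """Normalize an entity name: lowercase, drop punctuation and legal suffixes."""
    tokens = re.findall(r"[a-z0-9]+", name.lower())
    return " ".join(t for t in tokens if t not in SUFFIXES)

def resolve(triples):
    """Rewrite triples so every alias maps to the first surface form seen for its key."""
    canonical = {}
    resolved = set()
    for s, r, o in triples:
        s = canonical.setdefault(canonical_key(s), s)
        o = canonical.setdefault(canonical_key(o), o)
        resolved.add((s, r, o))  # the set drops duplicate facts after merging
    return sorted(resolved)

triples = [
    ("Acme Corp", "supplies", "Globex"),
    ("ACME Corporation", "supplies", "Globex Inc."),
    ("Acme Corp.", "located_in", "Ohio"),
]
print(resolve(triples))
```

Three surface forms collapse into one “Acme Corp” node with two distinct facts. Rules this simple will also over-merge genuinely different companies with similar names, which is exactly the kind of trade-off worth asking a partner about.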

Where GraphRAG Implementation Adds the Most Value

GraphRAG isn’t a universal replacement for standard retrieval augmented generation. It’s the right architecture when:

- Data has rich relational structure

- Multi-hop queries are common

- Accuracy and traceability are non-negotiable

At DPL, we deploy GraphRAG for clients in healthcare, financial services, manufacturing, and logistics. Clients with complex, interconnected data stand to see the sharpest accuracy gains when GraphRAG replaces or augments their existing RAG stack.

You can see what this looks like in DPL’s AI and software project portfolio.

Think Your Organization Can Benefit from GraphRAG?

GraphRAG implementation represents a meaningful leap beyond the limitations of flat vector retrieval. It’s essential to making AI systems more accurate, more auditable, and more capable of handling the complex, relational questions that matter in enterprise environments.

If your team is evaluating GraphRAG development or exploring how structured knowledge retrieval can raise the accuracy bar for your AI applications, DPL can help.

Book a consultation with DPL’s AI engineering team to get the most from your AI solutions.